Life In The Tinderbox

I hesitate to write anything about global warming.

Two reasons, really:

- I don’t know very much about global warming. I can’t criticise papers on methane seeps or the La Niña effect very well, because I can barely read them. It’s basically an area of applied mathematics. I don’t get it.

- It attracts The Unholy Bugshit. Self-explanatory. Mention anything about the climate on the internet, and baboons descend. I don’t have time to do the stuff I have time to do, and so arguing with someone called “KENNY MAGA #420 #SQUADLYF” about satellite measurements of ground temperature is lower on my To-Do list than doing my own dental work with a gutter adze.

However, today I’ll make an exception — mainly because the paper in question is related to global warming without actually studying the phenomenon itself. It is instead a study of what people think about global warming.

And what they think — much like the North Sea these days — is oddly lukewarm.

You’ll see.

The study on the table is “Dead indoor plants strengthen belief in global warming” by Nicolas Guéguen. doi: 10.1016/j.jenvp.2011.12.002. It’s from the Journal of Environmental Psychology, published in 2012. It has 15 citations to date, which isn’t a lot, but considering the subject matter, it’s not surprising where it’s ended up. The New York Times, for instance.

(This is from an ongoing series on Dr. Guéguen’s work I’m doing with Nick Brown. Stay tuned for more.)

The premise of the paper is simple: put people in a room with a dead plant and they’ll endorse statements about global warming more strongly than if the plant were alive.

In essence, participants are stuck in an unaccountably small room (3 x 1.5 m) and given a questionnaire with global warming-centric questions sprinkled through it. Half of them are accompanied by a 5ft healthy potted plant (which no-one thought was weird at all, apparently), and the other half with a 5ft dead potted plant (which is, let’s face it, even weirder).

Oh, and how dead was said plant? Well, the author felt the need to test it. Apparently, people rate a dead plant as being dead, and an alive one as being alive. Who knew? Why the hell anyone would need a manipulation check to see if a plant which is just decorative twigs is dead, well, that’s totally beyond me. Thus, we have a Leaves condition and a Twigs condition. But I digress.

Beyond that, nothing comes hurtling out as being stunningly incorrect… not like, say, this paper which gave three different cell sizes for the same group of people.

But there is one obvious screw-up, and one red flag.

The screw-up: the t-tests have degrees of freedom listed as 28. With two groups of 30, it should be 58 (30 + 30 − 2). A minor error, yes, but also a super-obvious one which means we should take a closer look at the table it’s in.

The red flag: the effect sizes are massive. The overall Cohen’s d for global warming beliefs in Leaves vs. Twigs is d=1.54, and the individual item effect sizes are similar.

This is possible, technically. It’s just a shockingly strong effect to occur from plant-based unconscious priming. This is more like the effect size you get when you compare a group of people you’ve just hit in the head with a frying pan to a control group who receive a small box of chocolates and a sincere compliment. Ask them “On a scale from 1 to 7, how much does your head hurt?” and you’ll get d=1.54. It’s a big difference.

Yeah, we should really take a closer look at that table.

And that table has…

Goddamn 1 d.p. Values

They’re the bane of my life.

When we reverse-engineer statistics without the underlying data, values are often reported to one decimal place. It might look neat in the paper, but if you’re interested in the guts of how the study works, 1 d.p. really is a holy pain in the etceteras. I’d ban them if I could.

The problem is simple: 1 d.p. values have so little precision that we have to do a pile of trickery to get reasonable underlying parameters.

The first pair of means reported in the paper are Likert-style values collected in n=30 people, measured from 1 to 7. The question is:

“I have already noticed some signs of global warming”

The Leaves: mean=5.3, SD=0.7, n=30.

The Twigs: mean=5.9, SD=0.5, n=30

We need to try to improve these estimates. Because everything is reported to 1 d.p., each true value could sit up to 0.05 of a unit above or below the reported one. In other words, the maximum difference between groups occurs with the smaller mean at the bottom of its range, the larger mean at the top of its range, and both SDs at the bottom of theirs. The minimum is the converse.

But wait!

The values of the mean can’t be anything you like, they have to be in 30ths. We are measuring people’s scores in whole numbers, and there are 30 people. Their sum of scores is in whole numbers, and therefore the mean is in 30ths.

In other words, for the Leaves condition, there are only THREE possible means which round to 5.3: they are 5.2667, 5.3 exactly, or 5.3333. No other means are possible.

The Twigs condition is similar; rounding to 5.9, the potential mean values are 5.8667, 5.9 exactly, or 5.9333.

(Note: this observation is the little-recognised-but-still-pretty-cool foster child of the GRIM and GRIMMER tests, called GRIMMEST. It’s Jordan Anaya’s idea.)
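The counting argument above is easy to check by brute force. This is a minimal sketch, assuming whole-number responses on a 1 to 7 scale and that a reported value x means the true value lies in [x − 0.05, x + 0.05). The function name `possible_means` is made up for illustration; it isn't taken from any GRIM tool or from the paper.

```python
from fractions import Fraction

def possible_means(reported, n, lo=1, hi=7):
    """List every mean of n whole-number scores (lo..hi) that rounds to `reported`.

    `reported` is a string such as "5.3" so it converts to an exact fraction.
    Assumption: a value is reported as x if it lies in [x - 0.05, x + 0.05).
    """
    target = Fraction(reported)
    half = Fraction(1, 20)  # half of one unit in the first decimal place
    means = []
    for total in range(lo * n, hi * n + 1):  # the sum of scores is an integer
        mean = Fraction(total, n)
        if target - half <= mean < target + half:
            means.append(mean)
    return means

for label, reported in [("Leaves", "5.3"), ("Twigs", "5.9")]:
    print(label, [round(float(m), 4) for m in possible_means(reported, 30)])
```

For n=30 this gives the same three candidates per condition as listed above. Exact fractions are used so that nothing depends on floating-point behaviour at the rounding boundaries.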

In other words, we can construct a window of what the results might be. I’ll italicise values that are reduced, and bold values that are increased.

So, the max difference case:

The Leaves: mean=*5.2667*, SD=*0.65*, n=30

The Twigs: mean=**5.9333**, SD=*0.45*, n=30

… and the min difference case:

The Leaves: mean=**5.3333**, SD=**0.75**, n=30

The Twigs: mean=*5.8667*, SD=**0.55**, n=30

This means, if we use the handy-dandy calculator at GraphPad.com, the true t-value is between t = 3.14 (min) and t = 4.62 (max). Hell of a range, isn’t it? That’s 1 d.p. for you. The reported t-value is possible as it’s within this range: t=4.07. A massive difference, but it’s *technically* possible.

Two further points:

- If we put in just the straight values from the paper, we get t=3.82, which is very definitely not t=4.07. This is not necessarily an error even if it was entered as 1 d.p. data, as software will often not tell you what t-test assumptions it uses at exactly n=30, and that point is important (Central Limit Theorem represent). We have no idea how it was originally done, because the paper — as is so often the case in non-technical papers — doesn’t list how anything was calculated. It could be a supercomputer or a pencil.

- We don’t have a good method to find out precisely what the right values are. We could shuffle any of the above parameters a little and get the right answer, but we don’t have any anchors for the right value. The lack of data and calculation details, plus the 1 d.p. reporting, has defeated us completely.
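If you’d rather not trust a web calculator, the window and the as-printed value can be checked in a few lines. This is a minimal sketch of a two-sample t statistic computed from summary statistics. It assumes equal group sizes, where the pooled-variance and Welch statistics are the same number. The helper name `t_from_summary` is made up, and none of this is a claim about how the paper’s values were actually computed.

```python
from math import sqrt

def t_from_summary(m1, s1, m2, s2, n):
    """Two-sample t statistic for two groups of equal size n.

    With equal n the pooled-variance and Welch statistics coincide:
    t = (m2 - m1) / sqrt((s1^2 + s2^2) / n)
    """
    return (m2 - m1) / sqrt((s1 ** 2 + s2 ** 2) / n)

n = 30
cases = {
    # means expressed in 30ths, SDs pushed to the edge of their rounding range
    "max difference": (158 / n, 0.65, 178 / n, 0.45),
    "min difference": (160 / n, 0.75, 176 / n, 0.55),
    # the values exactly as printed in the paper
    "as printed": (5.3, 0.7, 5.9, 0.5),
}
for name, (m1, s1, m2, s2) in cases.items():
    print(f"{name}: t = {t_from_summary(m1, s1, m2, s2, n):.2f}")
```

This reproduces t = 4.62, t = 3.14 and t = 3.82 respectively.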

Side point: how much detail of scientific endeavour is lost when we report things badly? How much more sleep could I get if open data policies were mandatory?

But we are still curious.** Let’s look at the other questions:

Q2

5.1 (0.6) vs. 5.6 (0.5), t = 3.71

Huh.

Forget about the t-values for a minute, these SDs are low.

Really, really low.

So low, in fact, that very, very few combinations of responses could produce them. Instead of making assumptions about where the SDs might end, we can simply use SPRITE to find the lowest possible SDs.

If we do so, the above becomes:

5.0667 (0.5833) vs. 5.6333 (0.4901), t=4.07

So, the range of possible t-values (which is t=2.57 to t=4.62) does contain the reported value.***

That’s a good start. It might be OK. Right?

WRONG. FAKE NEWS.

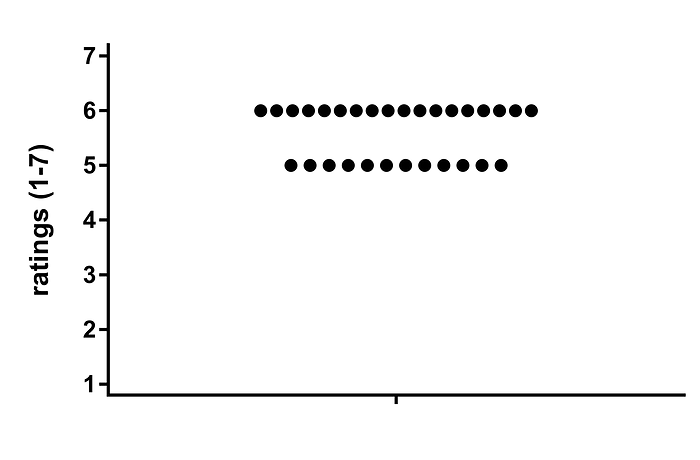

Here is what SPRITE says mean = 5.6, SD = 0.5 is:

Notice how I said ‘is’, instead of ‘might be’? SPRITE returns possible solutions… except when there’s only one possible solution. And it’s this.

The SD of this sample is so low, EVERY SINGLE PERSON put 5 or 6 on that old 7 point scale. That’s right. Everyone.

There are no 4’s. Add a 4 and the SD is 0.5632.

There are no 7’s. Add a 7 and the SD is 0.5561.

Both round, obviously, to 0.6 (1 d.p., remember?)
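You can confirm the 5-or-6 claim without SPRITE by simply listing every sample that fits. To be clear, this is not SPRITE, which searches for solutions rather than enumerating them. It is a brute-force sketch under stated assumptions: whole-number answers from 1 to 7, n=30, the sample SD (n − 1 denominator), and a reported value x meaning the true value lies in [x − 0.05, x + 0.05). The function name `consistent_samples` is made up.

```python
def consistent_samples(n, mean_1dp, sd_1dp, lo=1, hi=7):
    """Return every way n whole-number answers on a lo..hi scale can give
    a mean and sample SD that round to the reported 1 d.p. values.

    Each result is a tuple of counts, one per scale point from lo to hi.
    Assumption: a reported x means the true value is in [x - 0.05, x + 0.05).
    """
    values = list(range(lo, hi + 1))
    ss_min = (sd_1dp - 0.05) ** 2 * (n - 1)  # smallest allowed sum of squared deviations
    ss_max = (sd_1dp + 0.05) ** 2 * (n - 1)  # upper bound (exclusive)
    found = []

    def search(i, left_n, left_sum, ss, counts, mean):
        if ss >= ss_max:  # squared deviations only accumulate, so stop early
            return
        if i == len(values):
            if left_n == 0 and left_sum == 0 and ss >= ss_min:
                found.append(tuple(counts))
            return
        v = values[i]
        for c in range(left_n + 1):  # c people chose scale point v
            if c * v > left_sum:
                break
            search(i + 1, left_n - c, left_sum - c * v,
                   ss + c * (v - mean) ** 2, counts + [c], mean)

    for total in range(lo * n, hi * n + 1):  # the sum of scores is an integer
        mean = total / n
        if mean_1dp - 0.05 <= mean < mean_1dp + 0.05:
            search(0, n, total, 0.0, [], mean)
    return found

for counts in consistent_samples(30, 5.6, 0.5):
    print(dict(zip(range(1, 8), counts)))
```

Under these assumptions there are three candidates, one for each mean in 30ths that rounds to 5.6 (totals of 167, 168 or 169 points). Fix the mean at exactly 5.6 and only one is left: twelve 5s and eighteen 6s. In every case, all thirty answers are a 5 or a 6.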

So, let me get this right: when a sample of socially-conscious university students are marched into a cupboard, sat down next to a twig-plant, and asked about global warming, absolutely no-one reports either being ambivalent about (4) or strongly agreeing with (7) the phrase “It seems to me that the temperature is warmer now than in previous years”?

Of course no-one is skeptical (1–3) at all either.

Every. Single. Person. 5 or 6.

Now, is it possible? Technically. I just feel like it’s very, very, very unlikely.

And I’m not done.

This scale has been administered before.

That paper is Joireman et al. (2010), doi: 10.1016/j.jenvp.2010.03.004.

This paper looks… well, at a glance, fine.

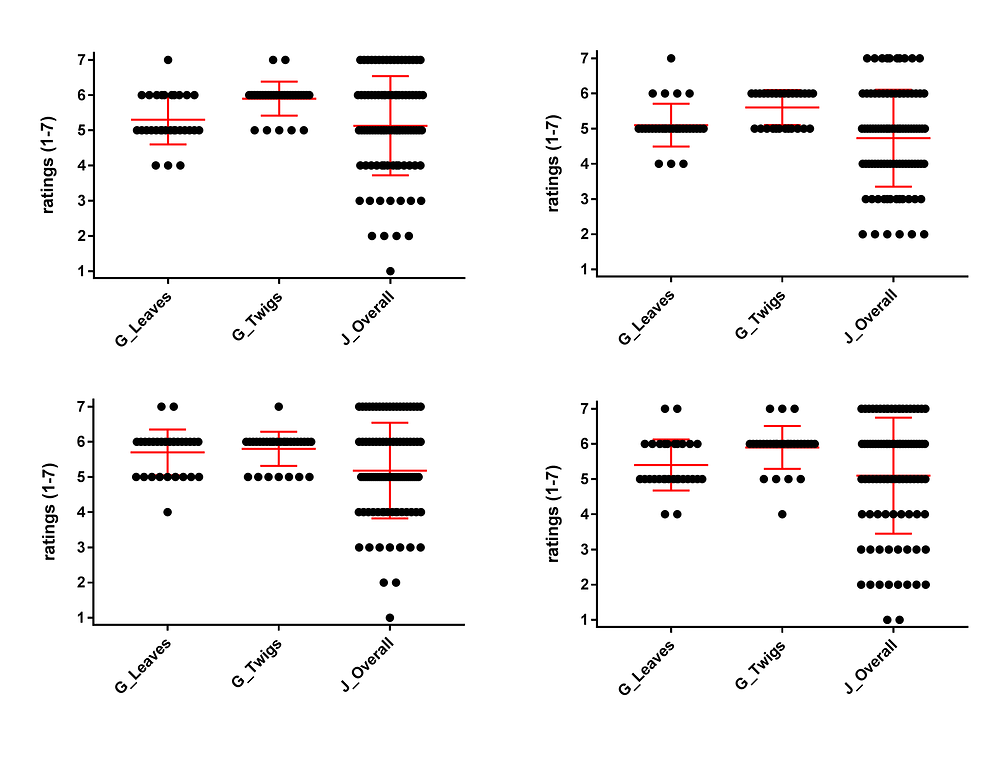

So let’s compare potential SPRITE solutions for all 8 of the mean/SD pairs given in Guéguen (2012) for these questions (G_Leaves, G_Twigs) to the original values from Joireman et al. (J_Overall):

Oh dear.

As you can see, the samples from Guéguen (2012) I’ve designated G_Leaves and G_Twigs are universally afflicted with an extraordinarily strong and persistent lukewarm agreement. No-one has any strong doubts, very few are even undecided. But at the same time, no-one has any strong endorsement either… the answer to ‘is there global warming going on?’ is a resounding and damn-near-unanimous ‘yeah, sorta, I guess, pass the shortbread’.

Naturally, other people have tried to measure this attitude (it’s important). For instance, an Ipsos poll in France reported that 53.5% of adults aged 18–24 surveyed replied “Oui, tout à fait” (“Yes, absolutely”) to a question asking if they personally thought that climate change was real.

Yet, here, when faced with the proposition “It seems to me that the temperature is warmer now than in previous years,” apparently not one participant can bring themselves to put 7 out of 7, even in the presence of ominous twigs.

Hmph.

Conclusion:

Something has gone seriously curly here. In total, we have:

- a series of extraneous and curious details (“Is this corpse dead? Rate its deadness from 1 to 7”…)

- wrong dfs…

- potentially curious t-values…

- bizarrely restricted variation in ALL samples…

- …^ which is totally at odds with (a) existing research using the same measure, (b) other measures of the same attitude and (c) common sense

And that’s just Study 1. Study 2 is more of the same. If you’ve got the paper open, check the answer to Condition “one indoor plant with foliage”, Question 4. Again, the forces of unanimous but extremely lukewarm agreement make themselves known.

P.S. More to come. You’ll see.

* Note that this doesn’t even require us to use GRIMMER to determine if the SDs are possible. The true max t value may be lower still.

** I made a big fat stupid error in the original version of this blog post, just from using the wrong value. This is what you get for working in the middle of the night. I apologise unreservedly to everyone who read it and the author. This affects the overall conclusion a little, but not a great deal… the lack of sample variation worries me more than everything else put together. I have retained a copy of the original here if you want to see me being wrong. Thank you Jordan Anaya for pointing this out immediately, you sharp-eyed bastard.

*** Used the R-calculator for these (thank you, Nick). Still possible with other calculators.